Ah, ok, yeah seems very custom. I guess it must predate Ingress.

No problem, good luck!

(Justin)

Tech nerd from Sweden

Ah, but your DNS discovery and failover aren’t using the built-in Kubernetes Services? Is the nginx using ingress-nginx or is it custom?

I would definitely look into Ingress or Gateway API, as these are the two standards that the Kubernetes developers are promoting for reverse proxies. Ingress is older and has more features for things like authentication, but Gateway API is more portable. Both APIs are supported by a number of implementations, like Nginx, Traefik, Istio, and Project Contour.

It may also be worth creating a second Kubernetes cluster if you’re going to be migrating all the services. Flannel is quite old, and there are newer CNIs like Cilium that offer a lot more features like eBPF, IPv6, WireGuard, tracing, etc. (Cilium’s implementation of the Gateway API is buggier than other implementations, though.) Cilium is shaping up to be the new standard networking plugin for Kubernetes, and even Red Hat and AWS are starting to adopt it over their proprietary CNIs.

If you guys are in Europe and are looking for consultants, I freelance, and my employer also has a lot of Kubernetes consulting expertise.

ah ok

Ah, interesting. What kind of customization are you using CoreDNS for? If you don’t have Ingress/Gateway API for your HTTP traffic, Traefik is likely a good option for adopting it.

All containers in a pod share an IP, so you can just use localhost: https://www.baeldung.com/ops/kubernetes-pods-sidecar-containers
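As a minimal sketch of the localhost point: a container can check whether a sidecar in the same pod is listening simply by connecting to 127.0.0.1. The function name and the port you pass in are hypothetical, chosen only for illustration.

```python
import socket


def reach_sidecar(port: int, timeout: float = 2.0) -> bool:
    """Return True if something is listening on localhost:port.

    Inside a pod, "localhost" is shared by all containers, so this would
    reach a sidecar container listening on that port (port is hypothetical).
    """
    try:
        with socket.create_connection(("127.0.0.1", port), timeout=timeout):
            return True
    except OSError:
        # Connection refused or timed out: nothing is listening there.
        return False
```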

Between pods, the universal pattern is to add a Service for your pod(s), and just use the name of the Service to connect to the pods the Service is tracking. Internally, the Service is a load balancer, running on top of kube-proxy or Cilium eBPF, and it tracks all the pods that match the correct labels. It also takes advantage of the kubelet’s health checks to connect/disconnect dying pods. kube-dns/CoreDNS resolves DNS names for all of the Services in the cluster, so you never have to use raw IP addresses in Kubernetes.
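Roughly, connecting by Service name looks like this. It is a sketch that assumes the default cluster domain `cluster.local`; the Service and namespace names are whatever you have defined, and the resolve step only works from inside the cluster.

```python
import socket


def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Build the in-cluster DNS name of a Service.

    Assumes the standard <service>.<namespace>.svc.<cluster-domain> layout
    with the default cluster domain unless told otherwise.
    """
    return f"{service}.{namespace}.svc.{cluster_domain}"


def resolve_service(service: str, namespace: str = "default") -> list:
    """Ask the cluster DNS for the Service's address(es).

    Only meaningful when run inside a pod, where the cluster DNS is used.
    """
    infos = socket.getaddrinfo(service_fqdn(service, namespace), None)
    return sorted({info[4][0] for info in infos})
```

From a pod in the same namespace, the short name (just the Service name) normally resolves too, so the full name is mostly needed across namespaces.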

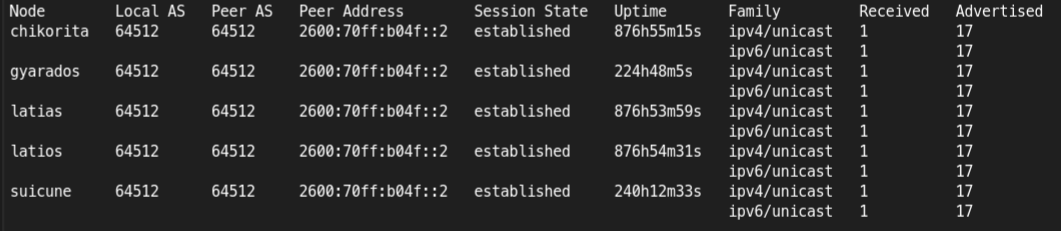

Go all out and register your container IPs on your router with BGP 😁

(This comment was sent over a route my automation created with BGP)

I’ve inherited it on production systems before. Automated service discovery and certificate renewal are definitely what admins should have in 2025. I thought the label/annotation system it used on Docker had some ergonomics/documentation issues, but nothing serious.

It feels like it’s more meant for Docker/Podman though. On Kubernetes I use cert-manager and Gateway API+Project Contour. It does seem like Traefik has support for Gateway API too, so it’s probably a good choice for Kubernetes too?

Good debugging!

I’m thinking that it’s best for production to use dynamic IP addresses, to avoid this kind of conflict. In the Kubernetes space, all containers must have dynamic IP addresses, which are then tracked by an eBPF load balancer with a (somewhat) static IP.

The Nextcloud Helm chart is nice.

Maybe CrowdSec could add a blocklist for LLM scrapers.

Ah, ok I see.

You only need dedup if your data is duplicated.

Nope, you don’t need any VPS to use it, it comes with an SFTP interface.

https://www.hetzner.com/storage/storage-box/

Offsite backup for $2/TB with no download fees, a third of the price of B2.

Hetzner Storage Box is super cheap and works with rclone. They have a web interface for configuring regular ZFS snapshots too, so you don’t have to worry about accidental deletions/ransomware.

Hardware-wise:

Software-wise, too many projects to count lol

Renovate is a very useful tool for automatically updating containers. It just watches a git repo and automatically updates stuff.

I have it configured to automatically deploy minor updates, and for bigger updates, it opens a pull request and sends me an email.
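The policy itself is simple enough to sketch. This is not Renovate’s code or config, just an illustration of the decision, assuming plain `MAJOR.MINOR.PATCH` version strings and assuming patch updates are treated the same as minor ones; the function names and action labels are made up for the example.

```python
def update_type(current: str, new: str) -> str:
    """Classify a version bump as major, minor, or patch.

    Assumes both versions are plain MAJOR.MINOR.PATCH strings.
    """
    cur = tuple(int(part) for part in current.split("."))
    nxt = tuple(int(part) for part in new.split("."))
    if nxt[0] != cur[0]:
        return "major"
    if nxt[1] != cur[1]:
        return "minor"
    return "patch"


def action_for(current: str, new: str) -> str:
    """Deploy small updates automatically; ask a human for major ones."""
    if update_type(current, new) == "major":
        return "open-pull-request"  # hypothetical label: review + email
    return "automerge"              # hypothetical label: deploy directly
```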

Yeah, full VMs are pretty old school. There are a lot more management options and automation available with containers, not to mention the compute overhead that VMs add.

Red Hat doesn’t even recommend that businesses use VMs anymore, and they offer a virtualization tool that runs the VMs inside a container for legacy apps. It’s called OpenShift Virtualization.

Yeah, Unraid is the same, it just adds a GUI to make it easier to learn. The downside is that Unraid is very non-standard and is basically impossible to back up or manage in source control like vanilla Docker or Kubernetes.

You should keep your Docker/Kubernetes configuration saved in git, and then have something like rclone take daily backups of all your data to something like a Hetzner Storage Box. That is the setup I have.

My entire kubernetes configuration: https://codeberg.org/jlh/h5b/src/branch/main/argo/custom_applications

My backup cronjob: https://codeberg.org/jlh/h5b/src/branch/main/argo/custom_applications/backups/rclone-velero.yaml

With something like this, your entire setup could crash and burn, and you would still have everything you need to restore safely stored offsite.
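For anyone not on Kubernetes, a daily rclone backup can be sketched roughly like this. It is not the cronjob linked above, just an illustration; the source path and the remote name are hypothetical and assume you have already set up a remote with `rclone config`.

```python
import subprocess


def rclone_sync_command(source: str, remote: str, dest_path: str) -> list[str]:
    """Build an `rclone sync` command that mirrors source to remote:dest_path.

    `remote` is the name of a remote you configured yourself (hypothetical here).
    """
    return ["rclone", "sync", source, f"{remote}:{dest_path}"]


def run_backup(source: str, remote: str, dest_path: str) -> None:
    """Run the sync and raise if rclone exits with an error."""
    subprocess.run(rclone_sync_command(source, remote, dest_path), check=True)
```

Calling `run_backup("/srv/data", "storagebox", "backups/data")` once a day from cron or a systemd timer would be one way to schedule it. Note that `sync` makes the destination match the source, so deletions propagate; that is where the snapshots on the storage side come in.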

Oh definitely, everything in kubernetes can be explained (and implemented) with decades-old technology.

The reason why Kubernetes is so special is that it automates it all in a very standardized way. All the vendors come together and support a single API for management which is very easy to write automation for.

There are standard, well-documented “wizards” for creating databases, load balancers, firewalls, WAFs, reverse proxies, etc. And the management for your containers is extremely robust and extensive, with features like automated replicas, health checks, self-healing, 10 different kinds of storage drivers, CPU/memory/disk/GPU allocation, and declarative mountable config files. All of that on top of an extremely secure and standardized API.

With regard to eBPF being used for load balancers, the company that writes that software, Isovalent, is one of the main maintainers of eBPF in the kernel. A lot of it was written just to support their Kubernetes CNI, Cilium. It’s used mainly so that you can have systems with hundreds or thousands of containers on a single node, each with their own IP address, firewall, etc. iptables was used for this before, but it started hitting a performance bottleneck for many systems. Everything is automated for you and open source, so all the ops engineers benefit from the development work of the Isovalent team.
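A toy model of where that bottleneck comes from, not real kernel code: a sequential rule chain has to be walked until something matches, while a hash-map lookup (the kind of structure eBPF programs use) takes about the same time regardless of how many entries exist. The IPs and pod names below are made up.

```python
def match_linear(rules, dest):
    """Walk the rules in order and count how many were checked.

    Models a sequential rule chain: the cost grows with the number of rules.
    """
    for steps, (service_ip, backend) in enumerate(rules, start=1):
        if service_ip == dest:
            return backend, steps
    return None, len(rules)


def match_hashed(table, dest):
    """Look the destination up directly in a hash map."""
    return table.get(dest)


# Hypothetical data: 200 services, each mapped to one backend pod.
rules = [(f"10.0.0.{i}", f"pod-{i}") for i in range(1, 201)]
table = dict(rules)
```

With these 200 entries, finding the last service takes 200 comparisons in the linear version and a single lookup in the hashed one, and the gap keeps growing as the node hosts more services.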

It definitely moves fast, though. I go to KubeCon every year, and every year there’s a whole new set of technologies that were written in the last year lol